The Accountability Loop: Systems That Govern Themselves

Creating workflows that know you better than you know yourself

Your systems work. Your environment enforces them.

Your attribution template won’t let you skip fields. Your verification checklist blocks delivery until it’s complete. Your workspace design keeps integrity resources visible.

The infrastructure is solid.

Then you discover a bypass.

Not illegal. Not unethical. Just... simpler. A way to mark the checklist complete without actually doing the verification.

Your systems have a vulnerability: you designed them.

And because you designed them, you know exactly how to circumvent them when the pressure gets high enough.

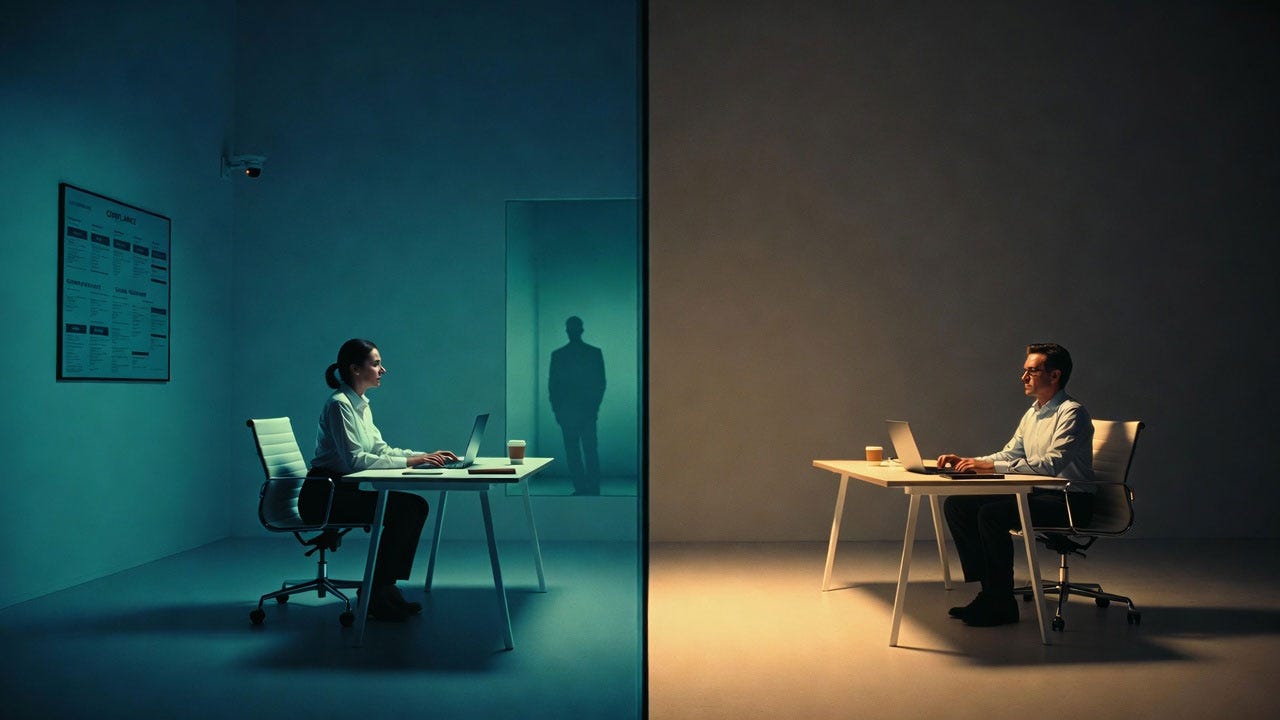

You’re not building systems for external surveillance. You’re building systems that govern themselves through internal accountability loops.

Systems where shortcuts create immediate, costly consequences—not to your reputation, but to your capability.

Systems where the fastest path to real success requires maintaining integrity, not performing it.

External accountability asks “Did you follow the process?” Internal accountability asks “Can you actually do this?” The second question can’t be faked.

Why External Accountability Fails

Most organizations try to maintain integrity through external oversight: managers checking work, peer review requirements, audit trails, performance monitoring.

This creates a fundamentally broken incentive structure: the goal becomes appearing to maintain standards rather than actually building capability.

Here's what happens when you try to legislate integrity: you get compliance theater. The more sophisticated your surveillance apparatus becomes, the more sophisticated the deception that evolves to satisfy it. This isn't a failure of character. It's a fundamental misunderstanding of human nature. We are pattern-recognition machines optimized for survival, and when survival means passing inspection rather than building capability, we optimize for passing inspection. Every time.

The audit becomes the enemy, not the pathway to excellence. And so you end up with organizations full of people who are brilliant at appearing competent while systematically hollowing out their actual capacity to perform.

They're not bad people. They're people trapped in bad systems: systems that mistake surveillance for accountability, compliance for competence, and performance for substance. And the tragic irony is that the more resources you pour into oversight, the more you guarantee the very deception you're trying to prevent.

Internal accountability operates differently.

The consequences of shortcuts aren’t “getting caught”—they’re systematic capability degradation that you can’t hide from yourself. You know immediately when you’ve skipped verification because your work quality drops. You know when you’ve bypassed systems because your cognitive load increases.

The accountability loop closes internally, automatically, inevitably. Not through surveillance.

Through consequence.

The Three Accountability Loops

Self-governing systems require three interconnected feedback loops that make shortcuts immediately costly to the person taking them.

Loop 1: The Competence Mirror

Problem: You can fake attribution. You can skip verification. You can claim understanding you don’t have.

Solution: Systems that require you to use what you claim to know.

Implementation:

Every project generates two outputs:

The deliverable (goes to client/stakeholder)

The methodology document (stays with you)

The methodology document requires:

What you learned (specific, testable claims)

What capability you built (what you can now do without AI)

What you can teach (to a hypothetical junior colleague)

What would break (if AI disappeared tomorrow)

The accountability: You have to read your own methodology documents.

When starting a new related project, your methodology from the last one is your starting point. If you faked it then, you’ll discover it now—not because someone audits you, but because you need that capability for current work.

In short: when your next project needs that claimed capability, fake documentation becomes immediately costly. You’re caught by your own need, not by an auditor.
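A minimal sketch of the Competence Mirror, assuming each methodology document is stored as a simple record tagged by topic. `MethodologyDoc`, its fields, the `starting_point` and `claims_to_retest` helpers, and the sample journal entry are all hypothetical, for illustration only. What it shows: a new project opens with the most recent related methodology, so the claims you wrote last time are the first things you have to use.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import date


@dataclass
class MethodologyDoc:
    """Hypothetical record of the private methodology document (one per project)."""
    project: str
    completed: date
    tags: set[str]
    learned: list[str]           # specific, testable claims
    capability_built: list[str]  # what you can now do without AI
    can_teach: list[str]         # what you could teach a junior colleague
    would_break: list[str]       # what fails if AI disappeared tomorrow


def starting_point(journal: list[MethodologyDoc], new_tags: set[str]) -> MethodologyDoc | None:
    """Return the most recent methodology that shares a tag with the new project."""
    related = [doc for doc in journal if doc.tags & new_tags]
    if not related:
        return None
    return max(related, key=lambda doc: doc.completed)


def claims_to_retest(doc: MethodologyDoc | None) -> list[str]:
    """List the claimed learning and capability the new project will now rely on."""
    if doc is None:
        return []
    return doc.learned + doc.capability_built


# Hypothetical journal entry for illustration.
journal = [
    MethodologyDoc("Q1 churn analysis", date(2024, 3, 29), {"churn", "sql"},
                   ["Cohort retention query structure"], ["Write cohort SQL without AI"],
                   [], []),
]
for claim in claims_to_retest(starting_point(journal, {"churn"})):
    print("Prove you can still:", claim)
```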

Loop 2: The Complexity Tax

Problem: Shortcuts look efficient in the moment because they hide their long-term costs.

Solution: Systems that make those costs visible immediately.

Implementation:

Track three metrics for every project:

Time to completion (how long it took)

Cognitive load (how much mental bandwidth it required)

Revision cost (how much time you spent on clarifications, fixes, and defenses)

Then calculate the True Cost:

True Cost = Initial Time + (Cognitive Load × Stress Multiplier) + (Revision Cost × Compounding Factor)

Integrity-based work: 10 hours initial + low cognitive load + minimal revisions = ~11 total hours

Shortcut-based work: 5 hours initial + high anxiety + exponential revision costs = ~47 total hours
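The True Cost formula translates directly into a function. The article doesn’t give values for the Stress Multiplier or the Compounding Factor, so the defaults below are placeholders for illustration only; they also assume the stress multiplier converts an honest 1-10 cognitive-load rating into hour-equivalents. The sample inputs are made up and are not meant to reproduce the ~11 and ~47 hour figures.

```python
def true_cost(
    initial_hours: float,
    cognitive_load: float,
    revision_hours: float,
    stress_multiplier: float = 0.5,   # assumed: hours of drag per point of load
    compounding_factor: float = 2.0,  # assumed: how much revision cost compounds
) -> float:
    """True Cost = Initial Time + (Cognitive Load x Stress Multiplier)
                 + (Revision Cost x Compounding Factor)."""
    if not 1 <= cognitive_load <= 10:
        raise ValueError("cognitive_load should be an honest rating from 1 to 10")
    return (
        initial_hours
        + cognitive_load * stress_multiplier
        + revision_hours * compounding_factor
    )


integrity = true_cost(initial_hours=10, cognitive_load=2, revision_hours=0.5)
shortcut = true_cost(initial_hours=5, cognitive_load=9, revision_hours=12)
print(integrity, shortcut)  # 12.0 33.5
```

Keeping both multipliers as explicit parameters keeps the assumption visible: if your own tracked projects show revisions compounding faster, change the number, not the formula.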

When you track true cost honestly, shortcuts stop looking efficient.

The accountability: The math is visible to you in real-time.

Not next quarter in a performance review. Right now, in your actual experience. You feel the cognitive load. You track the revision costs. You see the compound effect.

The loop closes through honest self-measurement, not external judgment.

Loop 3: The Capacity Mirror

Problem: You can maintain the appearance of productivity while systematically degrading actual capability.

Solution: Systems that track capability growth separately from output volume.

Implementation:

Monthly capacity audit (15 minutes, private):

What can I do now that I couldn’t 90 days ago?

What work would become impossible if AI tools disappeared?

What parts of my reputation are borrowed vs. built?

Then compare to output growth:

Healthy Pattern:

Output increasing

Capability increasing

Dependency stable or decreasing

Dangerous Pattern:

Output increasing

Capability stable

Dependency increasing

When these metrics diverge, you’re not building a career—you’re borrowing one.
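The two patterns can be checked mechanically once each audit records how output, capability, and dependency moved since the previous one. A minimal sketch, assuming each trend is summarized as a signed change (positive means increasing) with an optional tolerance for “stable”; `direction` and `classify` are hypothetical helpers. It also treats falling capability as dangerous, an extension of the article’s “capability stable” case, and reports anything else as mixed rather than guessing.

```python
def direction(delta: float, tolerance: float = 0.0) -> str:
    """Map a signed change to 'up', 'down', or 'flat' (within tolerance)."""
    if delta > tolerance:
        return "up"
    if delta < -tolerance:
        return "down"
    return "flat"


def classify(output_delta: float, capability_delta: float,
             dependency_delta: float, tolerance: float = 0.0) -> str:
    output = direction(output_delta, tolerance)
    capability = direction(capability_delta, tolerance)
    dependency = direction(dependency_delta, tolerance)

    # Healthy: output up, capability up, dependency stable or decreasing.
    if output == "up" and capability == "up" and dependency in ("flat", "down"):
        return "healthy"
    # Dangerous: output up, capability stable (or falling), dependency up.
    if output == "up" and capability in ("flat", "down") and dependency == "up":
        return "dangerous"
    return "mixed"


print(classify(output_delta=4, capability_delta=0, dependency_delta=3))  # dangerous
```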

The accountability: You can’t lie to yourself about the numbers.

Not because someone’s auditing. Because you need increasing capability for increasing responsibilities. When your output grows but capability doesn’t, future opportunities start requiring competence you don’t have.

The loop closes when opportunity meets reality.

Building Self-Governing Systems

The alternative to external surveillance isn't chaos—it's internal feedback loops that make deception more costly than honesty. Not more morally costly. More practically costly. Systems where the person taking the shortcut pays the price immediately, directly, inevitably—not through punishment, but through the natural consequences of degraded capability.

This is how nature works. Touch a hot stove, learn immediately. Ignore structural integrity, watch the building collapse on you specifically. Skip verification, lose the ability to defend your work when it matters most. The feedback is instantaneous, the lesson is indelible, and there's no appeals process where you can argue your way out of consequences.

What we're building here isn't moral philosophy. It's engineering. We're designing systems where the incentive structure aligns with reality instead of fighting it. Where the path of least resistance leads toward competence instead of away from it. Where "doing it right" isn't virtue—it's efficiency correctly calculated over the actual timeframe that matters.

Here’s how to implement accountability loops that don’t require external oversight.

Phase 1: Methodology Documentation (Week 1)

Create a private “Methodology Journal”:

For every significant project, document:

PROJECT: [Name]

DATE: [Completion]

WHAT I BUILT

- Deliverable: [What shipped]

- Capability: [What I can now do]

- Learning: [What's mine now, not borrowed]

HOW I BUILT IT

- AI Collaboration: [Specific, honest]

- Verification Method: [What I actually did]

- Competence Applied: [What I contributed beyond AI]

WHAT I CAN TEACH

- To junior colleague: [Specific methodology]

- To peer: [Strategic insights]

- To future self: [What to remember]

WHAT WOULD BREAK

- If AI unavailable: [Dependencies]

- If asked to explain: [Gaps in understanding]

- If need to replicate: [Missing capability]

NEXT TIME

- Do differently: [Lessons learned]

- Build capability in: [Identified gaps]

- Systemize: [Make automatic]

The rule: You must review past methodologies before starting related new work.

This makes faking it in Month 1 costly in Month 3 when you need that claimed capability.
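The template only enforces itself if an entry can’t be filed with fields left blank. A minimal sketch of that gate, assuming each journal entry is stored as a dictionary keyed by the template’s field labels; `REQUIRED_FIELDS`, `unfinished_fields`, and `file_entry` are hypothetical names. Treating any remaining `[bracketed]` text as an unfilled placeholder is also an assumption, and it would flag legitimate brackets too.

```python
import re

# Field labels copied from the Methodology Journal template.
REQUIRED_FIELDS = [
    "PROJECT", "DATE",
    "Deliverable", "Capability", "Learning",
    "AI Collaboration", "Verification Method", "Competence Applied",
    "To junior colleague", "To peer", "To future self",
    "If AI unavailable", "If asked to explain", "If need to replicate",
    "Do differently", "Build capability in", "Systemize",
]

# Template placeholders look like "[What shipped]"; leftover brackets mean the field wasn't filled in.
PLACEHOLDER = re.compile(r"\[[^\]]*\]")


def unfinished_fields(entry: dict[str, str]) -> list[str]:
    """Return template fields that are missing, blank, or still hold a placeholder."""
    unfinished = []
    for name in REQUIRED_FIELDS:
        value = entry.get(name, "").strip()
        if not value or PLACEHOLDER.search(value):
            unfinished.append(name)
    return unfinished


def file_entry(journal: list[dict[str, str]], entry: dict[str, str]) -> None:
    """Append the entry only when every field is genuinely filled in."""
    missing = unfinished_fields(entry)
    if missing:
        raise ValueError(f"Entry incomplete: {', '.join(missing)}")
    journal.append(entry)
```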

Phase 2: True Cost Tracking (Week 2)

Track true cost through real project comparison:

Start simple: Pick two recent projects with similar scope but different approaches.

Example: Analysis A vs. Analysis B

Analysis A (The Integrity Approach): You spent 8 hours doing thorough verification up front. Documented methodology. Double-checked sources. Built defensible foundation. During those 8 hours, cognitive load stayed around 3/10—focused work, nothing to hide, clear attribution. When stakeholder questions came, you spent 1 hour walking them through your documented process with confidence.

Total: 9 clock hours, or about 11 hours of true cost once the cognitive load is counted. Low-stress, defensible work.

Analysis B (The Shortcut Approach): You finished in 4 hours by skipping verification steps. Moved fast. Hit the deadline. But cognitive load spiked to 8/10 immediately: managing what you didn’t verify, anxiety about gaps, mental bandwidth consumed by “what if they ask about...” Then stakeholder questions arrived. Without methodology to reference, you spent 12 hours reconstructing work you claimed to have done, defending gaps, redoing analysis under scrutiny.

Total: 16 clock hours, or about 40 hours of true cost once the 8/10 load and the compounding revisions are counted. High-anxiety, defensive work.

Track these three numbers weekly:

Initial time investment

Cognitive load during and after (honest 1-10 scale)

Revision/defense time when questions arrive

Review monthly. Look for patterns:

Which approaches have lowest true cost?

Where are shortcuts actually expensive?

What’s the correlation between initial speed and revision burden?

The math doesn’t lie. When you track true cost honestly—including the cognitive load you carry and the revision time you pay later—shortcuts stop looking efficient.

The data makes the invisible visible.

Phase 3: Capacity Audit (Week 3)

Monthly 15-minute reflection:

Answer honestly (nobody sees this):

Capability Growth:

New skills I own: [Specific]

New problems I can solve: [Specific]

Teaching confidence: [What I could teach]

Dependency Reality:

Work requiring AI: [Percentage]

Work I could replicate: [Percentage]

Borrowed vs. built competence: [Honest ratio]

Gap Analysis:

What I’m claiming to know: [List]

What I actually know: [List]

The gap: [Honest assessment]

Next 90 Days:

Capability to build: [Specific]

Dependency to reduce: [Specific]

Gap to close: [Specific]

Review last month’s audit before writing this month’s.

When you see capability stagnating while output grows, you have advance warning of the competence crisis before it becomes a career crisis.
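Gap Analysis is a set difference between what you claim and what you actually know, and reviewing last month’s audit is a comparison of two such gaps. A minimal sketch, assuming claims are written as short, consistently worded labels; `CapacityAudit`, `compare`, and the example entries are hypothetical. Exact string matching is a simplification, so real entries would need consistent wording to compare cleanly.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class CapacityAudit:
    """Hypothetical record of one monthly capacity audit."""
    month: str
    claimed: set[str]        # What I'm claiming to know
    actual: set[str]         # What I actually know
    ai_dependent_pct: float  # Work requiring AI, as a percentage

    @property
    def gap(self) -> set[str]:
        """Claims that aren't backed by things you actually know."""
        return self.claimed - self.actual


def compare(last: CapacityAudit, this: CapacityAudit) -> dict:
    """Read last month's audit against this month's."""
    return {
        # Closed gaps: either you learned it or you stopped claiming it.
        "closed": last.gap - this.gap,
        "new": this.gap - last.gap,
        "persisting": last.gap & this.gap,
        "dependency_change": this.ai_dependent_pct - last.ai_dependent_pct,
    }


april = CapacityAudit("April", {"SQL", "forecasting", "A/B testing"}, {"SQL"}, 40.0)
may = CapacityAudit("May", {"SQL", "forecasting", "A/B testing", "causal inference"},
                    {"SQL", "A/B testing"}, 55.0)
print(compare(april, may))
```

The “closed” bucket deliberately doesn’t distinguish learning a skill from dropping the claim; either way, the claim and the reality now agree.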

Together, these three phases create accountability that closes internally, automatically, inevitably.

What Actually Holds Long-Term

Here’s what makes self-governing systems actually work over years, not just months.

Not perfection. Honest feedback.

Your systems should tell you the truth, not make you look good.

When a methodology document reveals you don’t actually understand something: good. That’s the system working. Address the gap now rather than discovering it in a crisis later.

When true cost tracking shows a shortcut was expensive: good. That’s data informing future decisions. Take shortcuts consciously, with eyes open to real cost.

When a capacity audit shows dependency increasing: good. That’s early warning. Adjust before it becomes structural fragility.

The system isn’t there to judge you. It’s there to give you accurate maps so you can navigate effectively.

The Self-Governance Principle

There’s a test for whether your accountability system is real or performative, and it’s devastatingly simple: Can you game it? Not “is it hard to game”—can you game it at all? Because if you can satisfy your system through appearance rather than substance, through documentation rather than capability, through checking boxes rather than building competence, then what you’ve built isn’t self-governance. It’s a more sophisticated form of the same surveillance you were trying to escape.

Real self-governing systems don’t rely on your virtue. They rely on mathematics. On feedback loops that close internally. On consequences that compound automatically whether anyone’s watching or not. The system doesn’t trust you to be good—it makes being good the most efficient strategy. Not through punishment. Through accurate accounting of actual costs over actual timeframes.

When shortcuts are genuinely more expensive than standards, you don’t need willpower to maintain integrity. You need arithmetic.

If your systems can be satisfied with performance rather than substance, they’re surveillance, not governance.

True self-governing systems:

Can’t be gamed without immediate cost (to you, not your reputation)

Create feedback loops that close internally (you can’t hide from yourself)

Make shortcuts more expensive than standards (the math is visible)

Build toward capability, not just compliance (the work creates the accountability)

When someone asks “how do you maintain integrity when nobody’s watching?” the answer shouldn’t be “I’m very disciplined.”

The answer should be: “My systems make shortcuts immediately costly to me, whether anyone’s watching or not.”

That’s not virtue. That’s engineering accountability that operates automatically.

And automatic accountability compounds.

The Three-Week Journey: Synthesis

This week we built a complete system:

Monday: The Integrity Checklist—Individual systems that force honesty

Wednesday: The Integrity Environment—Workspace architecture that makes systems automatic

Friday: The Accountability Loop—Self-governing feedback that doesn’t require surveillance

Together, they create infrastructure where:

Integrity isn’t something you do—it’s how your environment functions

Standards aren’t maintained through willpower—they’re enforced through design

Accountability isn’t external surveillance—it’s internal feedback loops

Shortcuts become more expensive than integrity—the math is visible

This is how integrity becomes automatic.

Not through character development.

Not through performance management.

Not through external oversight.

Through engineering systems that make the integrity path the efficient path.

Three Paths Through the Noise is published Monday, Wednesday, and Friday mornings, with a synthesis podcast each Sunday evening. It’s not about reacting to AI news—it’s about building frameworks that work regardless of which tools emerge next.

This week’s paths through the noise:

Part One: The Integrity Checklist: Environmental Design That Forces Honesty

Part Two: The Integrity Environment: Workspaces That Build Character

Part Three: The Accountability Loop: Systems That Govern Themselves

Podcast: The System Design: Building Automatic Integrity