The Integrity Checklist: Environmental Design That Forces Honesty

When willpower fails, systems make integrity the default choice

If you read last week’s series or listened to the podcast, you understand the mathematics.

You know that integrity under pressure compounds toward freedom while shortcuts compound toward imprisonment. You’ve seen how deadline decisions, attribution temptation, and competence crises reveal what you actually built versus what you performed.

You get it.

And then Monday morning arrives.

Three urgent client requests. AI can handle two of them in 20 minutes if you just copy-paste. The third requires deep thinking you don’t have bandwidth for. Your calendar is bleeding red. Slack is exploding. Your manager needs those slides by noon.

In that moment—that specific, bandwidth-collapsed, nobody-watching moment—all your theoretical commitment to integrity evaporates.

Because willpower is not a system.

And what you need isn’t more motivation to “do the right thing.”

What you need is environmental design that makes the integrity choice the easiest choice.

Systems that force honest assessment. Checkpoints that create accountability when no one’s watching. Frameworks that make shortcuts harder than maintaining standards.

Not because you’re virtuous. Because you’re human.

And humans don’t operate on willpower under pressure—they operate on infrastructure.

Systems that require willpower eventually fail. Systems that build competence eventually become effortless.

Why Willpower Always Fails

Here’s what most integrity discussions get catastrophically wrong: they treat it as a character question when it’s actually an engineering problem.

Willpower is a depletable resource that fails precisely when you need it most—under pressure, when bandwidth collapses, when shortcuts look brilliant, when nobody’s watching.

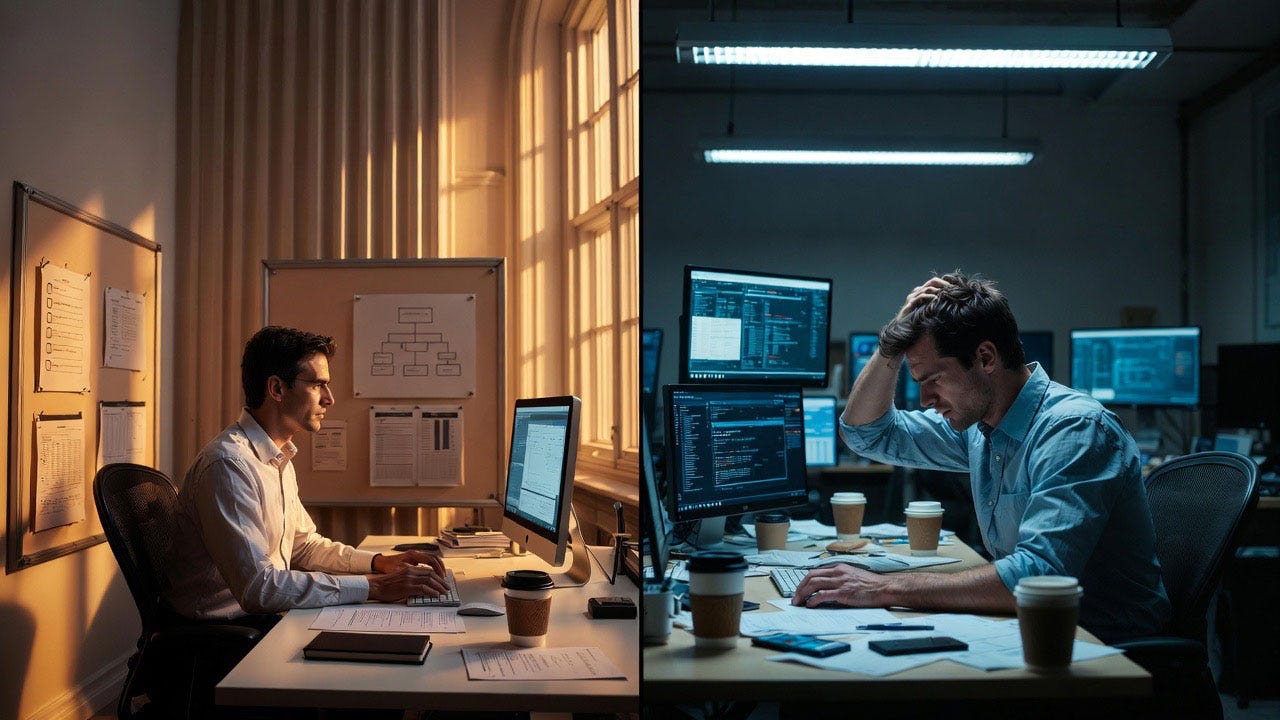

The person relying on willpower looks more moral in Month 1. The person who built systems looks more effective in Month 12.

But only one of them still has integrity intact when the pressure actually arrives.

Because willpower requires conscious decision-making that shuts down under stress. Systems operate automatically regardless of your cognitive state.

The Five System Layers

Environmental design for automatic integrity operates on five layers, each making the next layer easier to maintain.

Layer 1: Pre-Decision Architecture

Don’t rely on making the right choice under pressure. Remove the choice entirely through environmental design.

Template that won’t compile without attribution fields populated:

## Collaboration Statement

Human contribution: [REQUIRED]

AI contribution: [REQUIRED]

Verification method: [REQUIRED]

You’re not choosing to maintain attribution; you’re choosing whether to use the template at all.
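One way to make a template "refuse to compile" is a small gate that builds the deliverable only when every field has been filled in. This is a minimal sketch, not a prescribed tool: the function names `missing_fields` and `build_deliverable` are hypothetical, and it assumes the statement is kept as plain text using the three labels from the template above.

```python
import re

# Field labels taken from the template above; the placeholder marks an unfilled field.
REQUIRED_FIELDS = ("Human contribution", "AI contribution", "Verification method")
PLACEHOLDER = "[REQUIRED]"


def missing_fields(statement: str) -> list[str]:
    """Return the required fields that are absent, empty, or still a placeholder."""
    missing = []
    for field in REQUIRED_FIELDS:
        pattern = rf"^{re.escape(field)}:[ \t]*(.*)$"
        match = re.search(pattern, statement, re.MULTILINE)
        value = match.group(1).strip() if match else ""
        if not value or value == PLACEHOLDER:
            missing.append(field)
    return missing


def build_deliverable(body: str, statement: str) -> str:
    """Assemble the final document, refusing if any attribution field is unfilled."""
    missing = missing_fields(statement)
    if missing:
        raise ValueError("Collaboration statement incomplete: " + ", ".join(missing))
    return body.rstrip() + "\n\n## Collaboration Statement\n\n" + statement.strip() + "\n"
```

Calling `build_deliverable` with an untouched template raises an error that names the empty fields, so the only way to get the output is to fill them in.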

Verification checklist that creates the final document. The output isn’t “AI response”—it’s “Verified Analysis.” You can’t deliver the verified version without completing the checklist.

Pre-decision architecture doesn’t ask “will you maintain standards?” It asks “do you want the output or not?”

Layer 2: Friction Design

Make shortcuts harder than standards through strategic friction points.

Humans follow the path of least resistance. If maintaining standards is easier than skipping them, humans will maintain standards—not because they’re virtuous, but because they’re efficient.

Low-friction shortcut: Copy AI output → Paste → Send

Higher-friction shortcut: Copy AI output → Remove attribution template → Justify → Paste → Send → Manage anxiety

High-friction integrity: Read → Understand → Verify → Document → Send

Lower-friction integrity: Use template → Check box → Check box → Auto-documents → Send

By making the integrity path systematically easier than the shortcut path, you’re relying on efficiency, not willpower.

Layer 3: Forcing Functions

Build checkpoints that literally cannot be bypassed without an explicit, conscious decision to break the system.

Example - Code Review Forcing Function:

Before any AI-assisted code merges:

Automated check: Does commit message explain AI collaboration? (Blocks merge if “No”)

Peer review requirement: Reviewer must confirm: “I understand how AI was used here” (Cannot approve without checking box)

Documentation requirement: README must include AI methodology section (CI/CD fails without it)

You can break the system. But you can’t accidentally bypass it. Every shortcut requires conscious circumvention.
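The first check in that list can be approximated locally with a Git `commit-msg` hook. Git passes the hook the path to the commit message file and aborts the commit if the hook exits non-zero. The sketch below assumes a team convention of an `AI-Collaboration:` trailer line; that trailer name is hypothetical, not a Git standard, and a true merge block would run the same check in CI.

```python
import re
import sys

# Hypothetical trailer name; any agreed-upon marker would work the same way.
TRAILER = re.compile(r"^AI-Collaboration:[ \t]*(\S.*)$", re.MULTILINE | re.IGNORECASE)


def check_commit_message(message: str) -> tuple[bool, str]:
    """Return (ok, detail): ok is False when the trailer is missing or empty."""
    match = TRAILER.search(message)
    if match is None:
        return False, "Missing 'AI-Collaboration:' trailer (write 'none' if no AI was used)."
    return True, match.group(1).strip()


def main(argv: list[str]) -> int:
    """Entry point for a commit-msg hook: argv[1] is the commit message file."""
    if len(argv) < 2:
        print("usage: commit-msg <message-file>", file=sys.stderr)
        return 2
    with open(argv[1], encoding="utf-8") as handle:
        ok, detail = check_commit_message(handle.read())
    if not ok:
        print(detail, file=sys.stderr)
        return 1  # a non-zero exit makes Git abort the commit
    return 0
```

A hook script would end with `sys.exit(main(sys.argv))`. Skipping it still works with `git commit --no-verify`, but that takes a deliberate extra flag, which is the point: the bypass is a conscious act, not an accident.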

Layer 4: Accountability Artifacts

Create automatic documentation that makes shortcuts visible even when nobody’s actively watching.

The system automatically logs every AI query, its context, the output, the verification method, and the final decision. Six months later, when someone asks “how did you reach this conclusion?” you have receipts.

Not to defend against accusations—to defend your own clarity about what you actually built.
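A log like that can start as an append-only file with one JSON record per line. The sketch below is one possible shape, assuming you call it from whatever wrapper sends your AI queries. The class `AIInteraction`, the functions `log_interaction` and `load_log`, and the file layout are all illustrative choices, not an existing tool.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class AIInteraction:
    """One record per AI exchange, covering the five things worth keeping."""
    query: str
    context: str
    output: str
    verification_method: str
    final_decision: str
    # UTC timestamp filled in automatically when the record is created.
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_interaction(entry: AIInteraction, log_path: Path) -> None:
    """Append one record as a single JSON line; earlier records are not rewritten."""
    with log_path.open("a", encoding="utf-8") as handle:
        handle.write(json.dumps(asdict(entry)) + "\n")


def load_log(log_path: Path) -> list[dict]:
    """Read every record back, skipping blank lines."""
    with log_path.open(encoding="utf-8") as handle:
        return [json.loads(line) for line in handle if line.strip()]
```

Because each call only appends, the file becomes a chronological trail you can search later without having to remember to write anything down.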

Layer 5: Compound Feedback Loops

Design systems that get easier to maintain over time, not harder.

Monthly self-audit (15 minutes):

What did I learn this month that I could explain to a junior colleague?

What AI-assisted work could I replicate without AI if needed?

Where am I renting capability vs. building it?

This isn’t performance review prep. It’s competence mapping. And it compounds—each month you track, the clearer your gaps become, the easier it is to address them before they become crises.

The Integrity Checklist

A properly designed checklist isn't a reminder—it's a forcing function that breaks your workflow if you bypass it. The difference matters: reminders can be ignored when you're stressed; forcing functions require conscious circumvention that makes the shortcut immediately visible to yourself.

Here’s a practical framework you can implement this week. Not theory—actual checkboxes that force honest assessment.

Before Using AI:

□ Clarity Check: Can I articulate what I need without AI?

□ Competence Baseline: Could I do this work without AI, even if slower?

□ Verification Framework: Do I understand how to verify AI output is correct?

After Receiving AI Output:

□ Understanding Test: Can I explain this to a colleague in my own words?

□ Verification Execution: Did I actually execute my verification framework?

□ Attribution Clarity: Is my collaboration statement accurate and complete?

Before Delivery:

□ Honest Assessment: Does my attribution accurately represent the collaboration?

□ Competence Reality Check: If asked to explain my methodology, would I be comfortable?

□ Future Self Test: In 6 months, will I be able to defend/explain/replicate this work?
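If you want these nine boxes to behave like a forcing function rather than a reminder, one option is to put the deliverable behind them. The following is a rough sketch under that assumption: `ChecklistRun` and its methods are hypothetical names, and the item labels are copied from the checklist above.

```python
from dataclasses import dataclass, field

# The three phases and nine items from the checklist above.
CHECKLIST = {
    "before_using_ai": ["Clarity Check", "Competence Baseline", "Verification Framework"],
    "after_ai_output": ["Understanding Test", "Verification Execution", "Attribution Clarity"],
    "before_delivery": ["Honest Assessment", "Competence Reality Check", "Future Self Test"],
}


@dataclass
class ChecklistRun:
    """Tracks which items have been ticked for one piece of work."""
    checked: set[str] = field(default_factory=set)

    def check(self, item: str) -> None:
        """Tick one item; unknown names are rejected so typos cannot pass silently."""
        known = {name for items in CHECKLIST.values() for name in items}
        if item not in known:
            raise KeyError(f"Unknown checklist item: {item}")
        self.checked.add(item)

    def unchecked(self) -> list[str]:
        """List remaining items in checklist order."""
        return [
            name
            for items in CHECKLIST.values()
            for name in items
            if name not in self.checked
        ]

    def release(self, deliverable: str) -> str:
        """Hand back the deliverable only when every box is ticked."""
        remaining = self.unchecked()
        if remaining:
            raise RuntimeError("Cannot deliver; unchecked: " + ", ".join(remaining))
        return deliverable
```

Ticking a box is still self-reported, so this does not verify honesty. What it does is make skipping an item a visible act instead of an omission.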

Implementing Your First System

Don’t try to build all five layers this week. Start with one forcing function that addresses your most common integrity risk point.

If your risk is attribution ambiguity:

Build a template that requires three fields before you can deliver:

What I contributed (specific thinking/decisions/frameworks)

What AI contributed (specific assistance/synthesis/suggestions)

How I verified (specific methods/checks/validation)

If your risk is verification skipping:

Build a checklist that creates the deliverable filename:

analysis-UNVERIFIED.pdf → becomes → analysis-verified-[method].pdf

You literally cannot create the final version without completing verification. The filename forces honesty.
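The renaming step can be sketched in a few lines. This assumes drafts are always saved with the `-UNVERIFIED` suffix shown above and that the method is supplied as a short phrase. The helper names `verified_path` and `finalize` are made up for illustration.

```python
import re
from pathlib import Path

UNVERIFIED_SUFFIX = "-UNVERIFIED"


def verified_path(draft: Path, method: str) -> Path:
    """Compute the final filename; fail if the draft marker or method is missing."""
    if not draft.stem.endswith(UNVERIFIED_SUFFIX):
        raise ValueError(f"{draft.name} is not marked {UNVERIFIED_SUFFIX}")
    # Turn the method description into a filename-safe slug.
    slug = re.sub(r"[^a-z0-9]+", "-", method.lower()).strip("-")
    if not slug:
        raise ValueError("A verification method is required to name the final file")
    base = draft.stem[: -len(UNVERIFIED_SUFFIX)]
    return draft.with_name(f"{base}-verified-{slug}{draft.suffix}")


def finalize(draft: Path, method: str) -> Path:
    """Rename the draft on disk to its verified name and return the new path."""
    target = verified_path(draft, method)
    draft.rename(target)
    return target
```

For example, `verified_path(Path("analysis-UNVERIFIED.pdf"), "Source cross-check")` yields `analysis-verified-source-cross-check.pdf`. Nothing stops you from renaming the file by hand, but then the missing method in the filename is itself the record of the shortcut.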

If your risk is competence confusion:

Build a monthly 15-minute reflection with three questions:

What did I learn that’s mine now (not borrowed)?

What capability am I renting that I should build?

What would break if AI disappeared tomorrow?

The reflection isn’t performance theater. It’s competence cartography.

Pick one. Implement it this week. Make it automatic before next Monday.

What Actually Compounds

Here's what most people miss about system design: the infrastructure you build in the first month doesn't just maintain standards—it creates capacity that didn't exist before. When your environment handles integrity automatically, your cognitive bandwidth stops being consumed by decision-making about whether to verify, whether to attribute, whether to document.

That bandwidth becomes available for actual thinking. The person who built systems isn't just more organized; they're operating with 20-30% more cognitive capacity, because their systems are handling what the person relying on willpower is still managing manually, through effort and anxiety.

Systems don’t just maintain integrity—they build capacity.

Month 1: Using attribution template feels like extra work

Month 2: Attribution template reveals patterns in collaboration

Month 3: Patterns inform better AI usage strategies

Month 6: Better strategies reduce verification burden

Month 12: System has evolved into competitive advantage

The person relying on willpower at Month 1 is exhausted by Month 6.

The person who built systems at Month 1 has infrastructure that compounds capability by Month 12.

Systems are how integrity becomes effortless.

Not because you’re virtuous. Because you built infrastructure that makes integrity the path of least resistance.

The System Design Principle

If maintaining integrity requires active thinking, you’re doing it wrong.

Integrity that depends on conscious decision-making collapses the moment cognitive load spikes—which is precisely when you need it most. Proper systems operate automatically regardless of your mental state, your stress level, or how many hours of sleep you got last night.

Integrity should be:

Automatic (happens without conscious decision)

Visible (creates documentation naturally)

Sustainable (gets easier over time, not harder)

Scalable (works under pressure, not just ideal conditions)

When someone asks “how do you maintain standards under deadline pressure?” the answer shouldn’t be “I try really hard to remember.”

The answer should be: “My systems won’t function without them.”

That’s not virtue. That’s engineering.

And engineering compounds.

Three Paths Through the Noise is published Monday, Wednesday, and Friday mornings, with a synthesis podcast each Sunday evening. It’s not about reacting to AI news—it’s about building frameworks that work regardless of which tools emerge next.

This week’s paths through the noise:

Part One: The Integrity Checklist: Environmental Design That Forces Honesty

Part Two: The Integrity Environment: Workspaces That Build Character

Part Three: The Accountability Loop: Systems That Govern Themselves

Podcast: The System Design: Building Automatic Integrity